
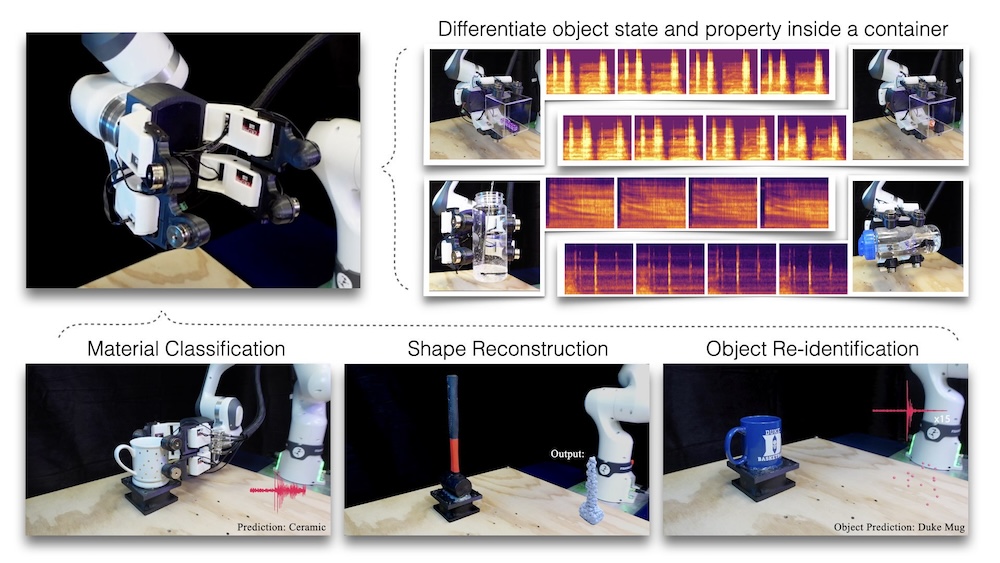

Researchers at Duke University have developed a system called SonicSense that gives robots a sense of touch by “listening” to vibrations. The researchers said this allows the robots to identify materials, understand shapes and recognize objects.

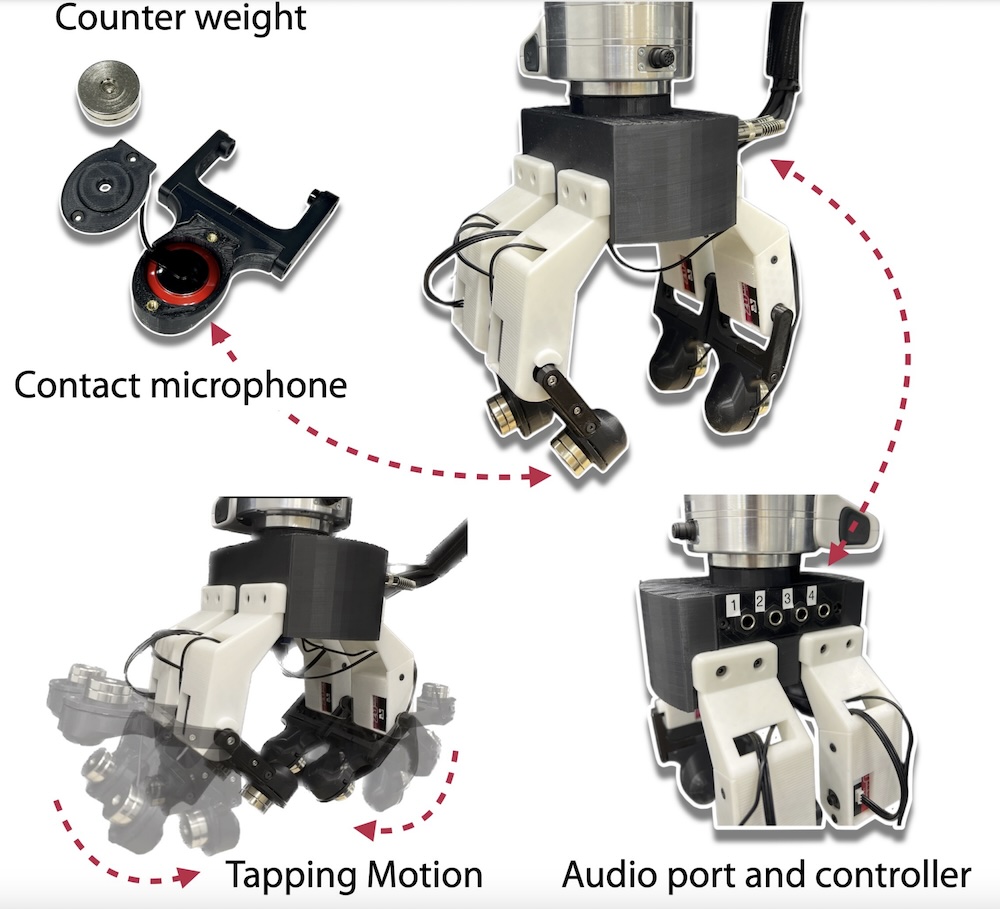

SonicSense is a four-fingered robotic hand with a contact microphone embedded in each fingertip. These sensors detect and record the vibrations generated when the robot taps, grasps or shakes an object. Because the microphones are in direct contact with the object, the robot can tune out ambient noise.

“Robots today mostly rely on vision to interpret the world,” explained Jiaxun Liu, lead author of the paper and a first-year Ph.D. student in the laboratory of Boyuan Chen, professor of mechanical engineering and materials science at Duke. “We wanted to create a solution that could work with complex and diverse objects found on a daily basis, giving robots a much richer ability to ‘feel’ and understand the world.”

Based on the interactions and detected signals, SonicSense extracts frequency features and uses its previous knowledge, paired with recent advancements in AI, to figure out what material the object is made of and its 3D shape. The researchers said that if it’s an object the system has never seen before, it might take 20 different interactions for the system to come to a conclusion. But if it’s an object already in its database, it can correctly identify it in as few as four.
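To make the idea of "frequency features" concrete, here is a minimal Python sketch. It is not the Duke team's pipeline, which relies on trained AI models. The sketch assumes a tap can be approximated by a decaying sine wave, uses two simple features (dominant frequency and spectral centroid), and swaps in a plain nearest-neighbor lookup for the learned model. The sample rate, material labels and signal parameters are all hypothetical values chosen for illustration.

```python
import cmath
import math

SAMPLE_RATE = 8000  # Hz; assumed value for this sketch, not from the paper


def synth_tap(freq_hz, decay, n=512, rate=SAMPLE_RATE):
    """Simulate a tap as an exponentially decaying sinusoid (an assumption)."""
    return [
        math.exp(-decay * t / rate) * math.sin(2 * math.pi * freq_hz * t / rate)
        for t in range(n)
    ]


def magnitude_spectrum(signal):
    """Naive DFT magnitudes for bins 0..N/2; slow but fine for short clips."""
    n = len(signal)
    mags = []
    for k in range(n // 2 + 1):
        acc = sum(
            x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(signal)
        )
        mags.append(abs(acc))
    return mags


def frequency_features(signal, rate=SAMPLE_RATE):
    """Return (dominant frequency, spectral centroid), both in Hz."""
    mags = magnitude_spectrum(signal)
    n = len(signal)
    freqs = [k * rate / n for k in range(len(mags))]
    peak = freqs[max(range(len(mags)), key=mags.__getitem__)]
    centroid = sum(f * m for f, m in zip(freqs, mags)) / sum(mags)
    return peak, centroid


def classify(features, references):
    """Nearest-neighbor lookup; a stand-in for the learned model."""
    return min(references, key=lambda name: math.dist(features, references[name]))


# Hypothetical "database" of previously tapped materials.
REFERENCES = {
    "metal": frequency_features(synth_tap(2000, 20)),
    "plastic": frequency_features(synth_tap(1000, 100)),
    "wood": frequency_features(synth_tap(500, 200)),
}

# A new tap that rings slightly differently from the stored metal example.
query = frequency_features(synth_tap(1950, 25))
guess = classify(query, REFERENCES)
print(guess)
```

A real system would work on recorded microphone audio, use many more features and combine evidence across repeated interactions rather than a single tap.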

“SonicSense gives robots a new way to hear and feel, much like humans, which can transform how current robots perceive and interact with objects,” said Chen, who also has appointments in, and students from, electrical and computer engineering and computer science. “While vision is essential, sound adds layers of information that can reveal things the eye might miss.”

Chen and his laboratory showcase a number of capabilities enabled by SonicSense. By turning or shaking a box filled with dice, it can count how many are inside and determine their shape. By doing the same with a bottle of water, it can tell how much liquid it contains. And by tapping around the outside of an object, much like how humans explore objects in the dark, it can build a 3D reconstruction of the object’s shape and determine what material it’s made from.

“While most datasets are collected in controlled lab settings or with human intervention, we needed our robot to interact with objects independently in an open lab environment,” said Liu. “It’s difficult to replicate that level of complexity in simulations. This gap between controlled and real-world data is critical, and SonicSense bridges that by enabling robots to interact directly with the diverse, messy realities of the physical world.”

The team said these abilities make SonicSense a robust foundation for training robots to perceive objects in dynamic, unstructured environments. So does its cost: the hand is built from the same contact microphones that musicians use to record sound from guitars, 3D-printed parts and other commercially available components, which keeps construction costs to just over $200, according to Duke University.

The researchers are working to enhance the system’s ability to interact with multiple objects. Integrating object-tracking algorithms should let robots handle dynamic, cluttered environments, bringing them closer to human-like adaptability in real-world tasks.

Another key development lies in the design of the robot hand itself. “This is only the beginning. In the future, we envision SonicSense being used in more advanced robotic hands with dexterous manipulation skills, allowing robots to perform tasks that require a nuanced sense of touch,” Chen said. “We’re excited to explore how this technology can be further developed to integrate multiple sensory modalities, such as pressure and temperature, for even more complex interactions.”

The SonicSense robot hand includes four fingers where each fingertip is equipped with one contact microphone. | Credit: Duke University
